In the last section we showed that the variance in a sample average decreases as the number of samples increases, provided the samples are sufficiently uncorrelated. As a consequence, sample averages of sufficiently uncorrelated variables are consistent estimators of unknown expectations. We established consistency in the sense that the expected square error converges to zero in the limit as the number of samples diverges.

In this section, we will show that, when the variance in a random variable converges to zero, then the distribution of the random variable must concentrate about its expectation.

Motivation¶

To motivate this effort, consider the following setting:

You can observe drawn jointly and for all . This occurs if the samples are drawn identically.

You don’t know , but want to estimate it. So, you estimate it with the sample average . You adopt the sample average since it is an unbiased estimator.

The samples are sufficiently uncorrelated so .

You don’t need an arbitrarily accurate estimator, but do want your estimates to be sufficiently accurate. For example, maybe you want to guarantee that the error in your estimate will be less than some threshold, . That is, you’d like to guarantee that for some . For example, you might demand that your estimates are accurate to within a tolerance of .

Since the estimates depend on random sample, they are random. We’ve assumed nothing about the actual distribution of the ’s, so we can’t work out the distribution of , let alone its support. It is too much to hope that we can provide any error guarantee with total certainty. However, we might look for a probabilistic guarantee. Something like, “if you use at least 100 samples, then the chance that your error is greater than the tolerance , is less than 1 in 1,000.” If you could prove this guarantee, then you could argue that, there is a 99.9% chance that the error will satisfy the bound .

A bound of this kind is an example of a tail bound. Tail bounds are bounds on tail probabilities (see Section 5.1).

Good tail bounds are very useful pieces of math. They enable analysts to make sharp probability statements without demanding exactly specified models. By trading off the amount of information used to inform the bounds, we can balance between specificity and generality.

Tail bounds are useful when:

We don’t have enough information to find the exact distribution of so can’t work out its cumulative distribution function or survival function,

The distribution of is hard to work with, so exact calculation of tail probabilities is cumbersome, or

We are looking to make a statement that holds over a range of different distributions.

In all three cases we pursue a bound, instead of an equality since an equality (exact tail probabilities) would require exact knowledge of the distribution, an exact calculation, and, would only apply to that specific distribution. In general, the more information we use when forming our bound, the stronger the bound. There is a trade-off here. The more information the bound demands, the more we need to know about the underlying distribution, the more involved the calculation, and the fewer distributions it applies to.

The first and third motivations apply in our estimation setting. Since we don’t know the distribution of the ’s, we can’t work out the exact distribution of their sample average. Moreover, we saw in the last section that sample averages are consistent estimators for unknown expectations under extremely weak conditions on the distribution generating the samples. As a consequence, sample averages were consistent estimators for a wide variety of distributions. We ought to be able to provide a tail bound, at least in the limit of large , that holds for a similarly wide range of distributions.

Returning to our estimation problem, we’d like a statement of the kind:

where the failure probability is some function of the precision we demand, , and the number of sampled we collect, . If we demand higher precision, while holding the failure probability fixed, or a smaller failure probability for a fixed precision, then we should need larger .

Reasoning about the relationship between a error tolerance, failure probability, and sample size, is a practical exercise. Since sample collection is often expensive, we often want to know the smallest sufficient sample size needed to guarantee accuracy. Alternately, if the sample size is fixed, then tail bounds allow the analyst to bound the confidence they should place on their estimates, or to find the highest precision statement available with a given failure probability.

We will explore two tail bounds in this section.

Markov’s Inequality¶

We’ll start with the simplest tail bound. This bound is not so useful by itself, since it is very loose. In most cases it grossly overestimates the true tail probability. So if used to inform an estimation policy it would usually produce far too conservative suggestions. However, we can modify it to derive more useful inequalities.

Our first bound formalizes the idea that, if is nonnegative, then it should be unlikely to see much larger than .

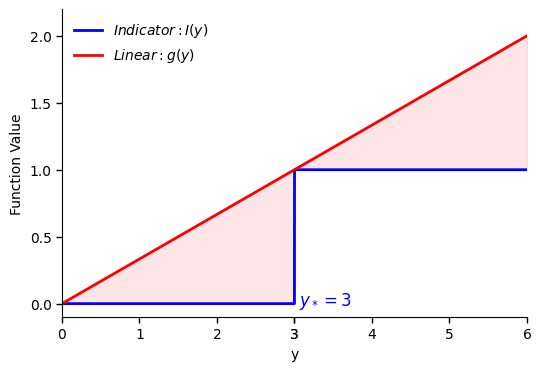

Consider the event . Let be an indicator function for the event . Then if and if . So, as a function of , is a step function. It is zero for all then jumps to 1 for all .

Recall that, the expected value of any indicator variable is the chance of the event it indicates (see Section 4.2). Therefore:

So, to bound the tail probability, we can bound the expected value of the indicator instead.

Consider the linear function that equals 0 at 0, and passes through the point , where the indicator function jumps from 0 to 1. This is the function:

The figure below illustrates the indicator function (blue) and the line (red) for . The shaded red region highlights the difference between these two functions. Notice that for all .

Since , its expected value must also be greater than or equal to the expected value of the indicator. Therefore:

Simplifying:

Therefore:

Sometimes it is helpful to think about Markov’s inequality as follows. Set for some . Then, Markov’s inequality says that:

In other words, the chance that a nonnegative random variable is times larger than its expected value is less than or equal to . So, the chance that is more than twice as large as its expectation is less than or equal to . The chance it is more than five times as large as its expectation is less than or equal to .

Here’s a table for different :

| 2 | 3 | 4 | 5 | 6 | 7 | ... | 10 | |

|---|---|---|---|---|---|---|---|---|

| ... |

Pros:

Markov’s inequality demands very little information. All you need to know is:

that your random variable is nonnegative and,

its expected value.

Cons:

Markov’s inequality only applies to nonnegative random variables.

Markov’s inequality provides a very loose bound for most distributions.

The latter con is fatal. Since the bound decays at rate , it only returns a small failure probability for very large . In most settings, it is very unlikely to see a random sample 4 times larger than its expectation, yet Markov would only bound the probability at 0.25. To find a bound whose failure probability is , we needed to increase all the way to 10! Only very heavy tailed, or heavily skewed, distributions produce samples 10 times larger than their expectation 10% of the time.

Here’s an example. Suppose that . Then is nonnegative, so Markov’s inequality applies. The expected value of is .

We worked out the exact tail probabilities of a geometric random variable in Section 5.1. The exact tail probabilities are:

Compare the exact tail probabilities to the Markov bound in the table below. Markov’s inequality provides valid bounds, but the bounds are extremely conservative:

| 1 | 2 | 3 | 4 | 5 | 6 | 7 | ... | 10 | |

|---|---|---|---|---|---|---|---|---|---|

| 1 | 1 | ... | |||||||

| ... |

The upper bound produced by Markov’s inequality is 200 times too large for .

Chebyshev’s Inequality¶

Markov’s inequality is very loose since it doesn’t use much information about the distribution. We can find tighter bounds by using more information. Let’s look for a two-sided tail bound that uses the variance to inform the bound.

A two-sided bound is a statement of the kind:

Notice, while may not be nonnegative, the random variable is nonnegative. So, Markov’s inequality applies:

The expectation in the numerator is the mean absolute deviation (MAD) in the random variable (see Section 4.3). So:

We can replace the mean absolute deviation with the variance in by replacing the absolute deviation, , with the squared deviation, . We’ll replace the absolute deviation with the squared deviation since the resulting bound decays more quickly as a function of once is large. As a reuslt, it is less conservative when we demand a very small failure probability. It is also easier to work with since we have stronger results for variances than mean absolute deviations. The bound using the mean absolute deviation is more useful for small .

To replace the absolute deviation with the squared deviation, notice that if and only if . Therefore:

The squared deviation is a nonnegative random variable, so Markov’s inequality applies:

The expectation in the numerator is the expected square deviation, so is the variance in . Thus:

This is Chebyshev’s inequality.

Like Markov’s inequality, Chebyshev’s inequality only gives an informative bound for , or, for .

Chebyshev’s inequality is more useful than Markov’s since it applies to any random variable, gives a two-sided bound, and the bound decays faster as a function of . Markov’s inequality produced a bound that decayed at rate . Chebyshev’s decays at rate .

Here’s a table for different :

| 2 | 3 | 4 | 5 | 6 | 7 | ... | 10 | |

|---|---|---|---|---|---|---|---|---|

| ... |

Notice how much faster the bound decays.

Pros:

Chebyshev’s inequality demands very little information. It only needs the variance in the random variable.

Chebyshev’s inequality is tight. If the only information we know about a random variable is its variance, then there is no bound smaller than the Chebyshev bound that would apply to all distributions.

Cons:

Because Chebyshev’s inequality is tight, it has to apply to all possible distributions with a given variance. As a result, it is very conservative, and is often quite loose for specific distributions. In many cases, we could find significantly smaller bounds if we used additional information. In particular, Chebyshev tends to produce overly conservative estimates if we demand a very small failure probability.

Because the right hand side of Chebyshev’s inequality is proportional to the variance, decreasing the variance lowers the value of the bound. Therefore, if the variance in the random variable converges to zero, then the chance it differs from its mean by any fixed threshold also converges to zero.

Other Bounds

The strategy we used to derive Chebyshev’s inequality would apply for any function that converts into a nonnegative random variable. In particular, we could derive two-sided tail bounds using:

for any nonnegative function that is monotonically increasing. For example:

This produces a bound that decays at rate . So, by increasing we can produce a bound that decays faster. There is no free lunch, since increasing makes grow faster as a function of , so increases the numerator.

Here’s a different example, try for some . Then:

This bound decays exponentially in . We can make it decay faster by increasing . As before, there is no free lunch. Increasing makes grow faster as a function of , so increases the constant in the numerator.

The best bound to use depends on which expectations we can compute, whether we want to optimize the rate at which the bound decays in the tolerance , or whether we want a small constant in the numerator. In practice, if we don’t need a very small failure probability, or want a bound that applies for small tolerances, then we should use a function that grows slowly in . If we need a very small failure probability, or want a bound that applies for large tolerances, then we should use a function that grows quickly in . If we can compute multiple bounds, then we should always pick the smallest and use it as our bound.

This is the idea behind Chernoff’s inequality, which says that:

Chernoff’s inequality is quite powerful since, there are a variety of important scenarios where we can compute the expectation as a function of , and the resulting bound can decay quite rapidly as a function of . For example, you could use Chernoff’s inequality to show that the survival function of a normal random variable decays faster than exponentially.

If you’d like to learn more about tail bounds, come talk to your professor, or take a second course in probability!

Application¶

Let’s apply Chebyshev’s inequality to our original problem.

Suppose that the random variables are drawn identically, with known . Then:

Suppose that the random variables are uncorrelated, then (see Section 13.1):

So:

Pay attention to the three terms in this bound.

Increasing decreases the bound for a fixed tolerance since the sample average becomes a more accurate estimator as we use more samples.

Decreasing the tolerance (demanding higher accuracy) decreases the chance the error is smaller than the tolerance, so increases the bound on the failure probability. This is an application of the inclusion bound from Section 1. All estimates within 0.01 of the true value are also within 0.1 of the true value, so we are more likely to see an estimate within 0.1 than 0.01.

Lastly, the term shows how the bound depends on the distribution of the samples. The larger the variance in the samples, the less accurate the estimator, so the larger the failure probability.

Let’s use this equation to find a sufficient sample size.

Suppose that the standard deviation in each sample is 5, we want our estimates to be accurate within 0.5, and we want our bound to fail with chance at most 0.05. Then , , and . Then, we should find the smallest such that:

This would require:

So, the minimum sample size necessary to ensure that the sample average is within 0.5 units of the true mean, with probability at least 95%, is less than or equal to 2,000.

Suppose we had only demanded that our bound hold 90% instead of 95% of the time. Would the necessary sample size increase or decrease?

Solution

If we allow more failures, then we don’t need as many samples. In particular, if we only wanted the bound to hold with chance 90%, then we’d have used , so would have needed at most .