In Section 5.2 we introduced methods that compare the rates at which different sequences converge. In Section 5.3 we showed that the rate at which a sequence converges determines whether or not sums of terms in the sequence (i.e. series) converge.

In this section we will apply the language developed in Section 5.2 to sort distributions by their tail decay rates. We will work from distributions that decay slowly to distributions that decay quickly. The integral convergence test established in Section 5.3 guarantees that the convergence arguments developed for series will extend to integrals.

Superexponential (Heavy) Tails

A distribution has heavy tails if it decays slowly.

In particular, a distribution has power law type tails if, for large $x$, it decays proportionally to $x^{-\alpha}$ for some $\alpha > 0$. We need $\alpha > 1$ when the random variable is unbounded, otherwise the distribution cannot be normalized.
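The normalization requirement can be checked numerically. The sketch below is only an illustration, not part of the course utilities: it assumes a discrete variable supported on the positive integers and compares partial sums of $x^{-\alpha}$ for $\alpha = 2$ and for the boundary case $\alpha = 1$. The helper name `partial_sum` is hypothetical.

```python
import math

def partial_sum(alpha, n_terms):
    """Sum of x**(-alpha) for x = 1, ..., n_terms."""
    return sum(x ** (-alpha) for x in range(1, n_terms + 1))

cutoffs = (10**3, 10**4, 10**5)

# alpha = 2: the partial sums level off near pi**2 / 6, so a normalizing constant exists.
converging = [partial_sum(2.0, n) for n in cutoffs]

# alpha = 1: the partial sums keep growing like log(n), so no normalizing constant exists.
diverging = [partial_sum(1.0, n) for n in cutoffs]

print("alpha = 2:", converging, "limit:", math.pi ** 2 / 6)
print("alpha = 1:", diverging)
```

Each tenfold increase in the cutoff adds roughly $\log(10) \approx 2.3$ to the $\alpha = 1$ sums, while the $\alpha = 2$ sums barely move.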

Examples:

Discrete Power Laws:

$\text{PMF}(x) \propto x^{-\alpha}$, for $x = 1, 2, 3, \ldots$

Examples include word frequency in natural language, and degree distributions (number of connections) in random networks (e.g. social networks).

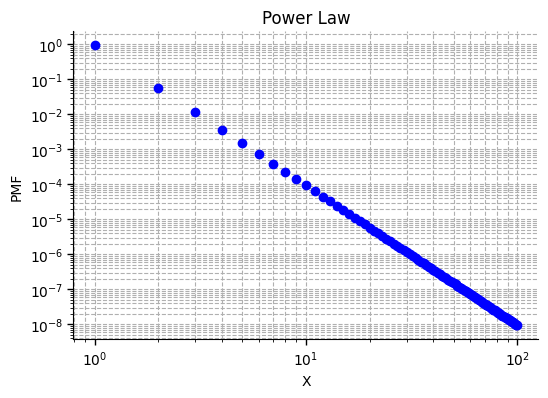

Here’s an example with power $\alpha = 4$ on a log-log plot. Notice that, on the log-log plot, the power law is a straight line, and its slope equals the negative of the power, -4. Markers denote specific values of the PMF sequence $\text{PMF}(x)$.
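Here is a minimal sketch that checks the slope claim without any plotting. It assumes a power law with power 4 on the positive integers, truncated at a hypothetical cutoff `n_max` purely so that the PMF can be normalized. The normalizing constant only shifts the log-log line vertically, so it does not affect the slope.

```python
import math

alpha = 4.0    # power of the power law, matching the example plot
n_max = 1000   # assumed truncation point, used only to normalize

weights = [x ** (-alpha) for x in range(1, n_max + 1)]
total = sum(weights)
pmf = [w / total for w in weights]   # pmf[x - 1] holds PMF(x)

# Slopes between consecutive markers on the log-log plot, for x = 1, ..., 20.
slopes = []
for x in range(1, 20):
    rise = math.log(pmf[x]) - math.log(pmf[x - 1])
    run = math.log(x + 1) - math.log(x)
    slopes.append(rise / run)

print(min(slopes), max(slopes))
```

Every consecutive pair of markers gives the same slope, the negative of the power, up to floating point error.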

You can experiment with discrete power law distributions using the Distribution Tail Explorer, or by running the code cell below. Try switching the $x$ and $y$ axes to log scales. You should see that, on a log-log scale, the bar plot follows a line, whose slope becomes more negative as you increase the power of the power law.

from utils_dist_5_1 import run_distribution_explorer_51

run_distribution_explorer_51(dist_type="Power law", lock_distribution=True)

Pareto Distributions:

for some , for some .

Examples include income and wealth distributions.

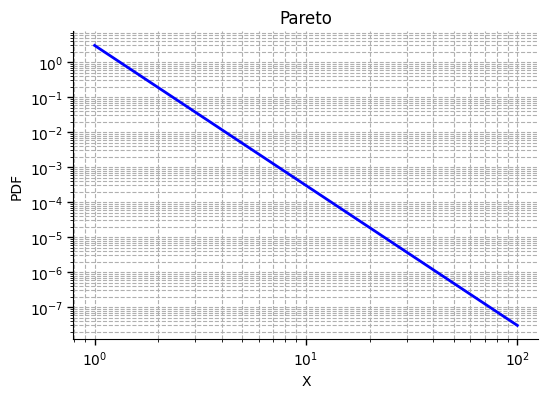

Here’s an example with parameters , and .

Since , the PDF decays according to a power law with power . Notice that, on the log-log plot, the PDF is a straight line, and its slope equals the power, -4. Notice that the Pareto density and the power law PMF are identical functions, but the Pareto is interpreted as a density for a continuous variable so is evaluated on all

You can experiment with Pareto distributions using the Distribution Tail Explorer or by running the code cell below. Try switching to a log-log scale and varying the parameters.

from utils_dist_5_1 import run_distribution_explorer_51

run_distribution_explorer_51(dist_type="Pareto", lock_distribution=True)

Student’s t-Distributions:

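$\text{PDF}(x) \propto \left(1 + \frac{x^2}{\nu}\right)^{-\frac{\nu + 1}{2}}$ for $\nu > 0$. In this case the tails decay proportionally to $|x|^{-(\nu + 1)}$ so $\alpha = \nu + 1$.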

Examples include estimated signal to noise ratios commonly used in hypothesis testing.

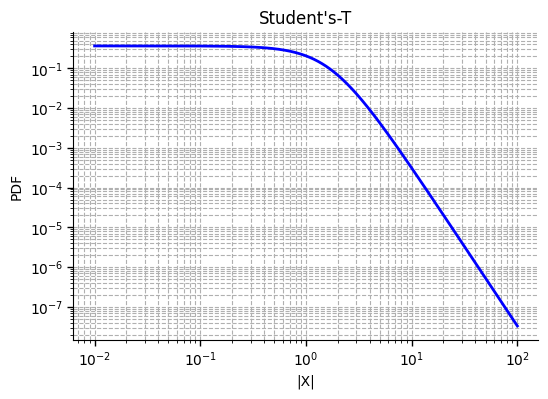

Student’s t distributions are bell-shaped, but have slowly decaying tails. Here’s a log-log plot showing the Student’s t density as a function of $x$ with $\nu = 3$.

The density is an even function, so it behaves symmetrically for negative $x$. When $\nu = 3$ the tails decay according to a power law with power 4, so on a log-log plot the density approaches a line with slope -4 when $x$ is large.
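The approach to slope -4 can be checked numerically. The sketch below assumes the standard Student’s t density shape with 3 degrees of freedom, left unnormalized since a constant factor does not change slopes on a log-log plot. The helper names are hypothetical and not part of the course utilities.

```python
import math

def t_density_unnormalized(x, nu):
    """Student's t density up to a constant factor."""
    return (1.0 + x * x / nu) ** (-(nu + 1.0) / 2.0)

def local_loglog_slope(f, x, factor=1.01):
    """Slope of log f against log x, measured between x and factor * x."""
    return (math.log(f(factor * x)) - math.log(f(x))) / math.log(factor)

nu = 3.0
slopes = {}
for x in (1.0, 10.0, 100.0, 1000.0):
    slopes[x] = local_loglog_slope(lambda z: t_density_unnormalized(z, nu), x)
    print(x, slopes[x])
```

Near the center of the bell the local slope is shallow. It only settles at -4 once the input is far out in the tail, which is why the power law behavior appears as an asymptote on the plot rather than as a straight line throughout.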

You can experiment with Student’s t distributions using the Distribution Tail Explorer or by running the code cell below. This time, just use a log scale for the $y$ axis since $\log(x)$ is undefined for negative $x$. Try increasing the free parameter $\nu$ that controls the shape of the distribution. Think about how $\nu$ controls the rate at which the tails decay. When $\nu$ is large, the log-plot will appear quadratic.

from utils_dist_5_1 import run_distribution_explorer_51

run_distribution_explorer_51(dist_type="Student-t", lock_distribution=True)

To detect power law tails, plot the log of the PMF (or PDF) against the log of $x$. If the resulting plot approaches a line for large $x$, then the distribution has power-law type tails.

Power law tails may converge slowly, especially when the power, $\alpha$, is close to 1. The smaller the power, the slower they converge. The larger the power, the faster the tails converge.

If $1 < \alpha \leq 2$ then the distribution exists but has infinite expected value. If $2 < \alpha \leq 3$ then the distribution exists and has a finite expected value, but has infinite variance and standard deviation. If $\alpha > 3$ then the distribution exists and has both finite expectation and finite variance.
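These thresholds come from asking when the sum of $x^k \cdot x^{-\alpha}$ converges, which requires $\alpha - k > 1$. The sketch below illustrates this for a discrete power law on the positive integers by comparing truncated moment sums at two cutoffs. The function names and the cutoffs are assumptions chosen for illustration.

```python
def truncated_moment(alpha, k, n_terms):
    """Unnormalized k-th moment sum: x**k * x**(-alpha) for x = 1, ..., n_terms."""
    return sum(x ** (k - alpha) for x in range(1, n_terms + 1))

def growth(alpha, k):
    """Ratio of the truncated moment at cutoff 10**5 to the one at 10**3.

    A ratio near 1 suggests the sum has settled (finite moment).
    A large ratio suggests it is still growing (infinite moment).
    """
    return truncated_moment(alpha, k, 10**5) / truncated_moment(alpha, k, 10**3)

for alpha in (1.5, 2.5, 3.5):
    print(alpha, "mean:", growth(alpha, 1), "second moment:", growth(alpha, 2))
```

With power 1.5 the mean sum keeps growing. With power 2.5 the mean settles but the second moment keeps growing. With power 3.5 both settle.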

Exponential Tails

A distribution has exponential tails if it decays at the same rate as an exponential function, $e^{-\lambda x}$ for some $\lambda > 0$. That is, if the tails are proportional to $r^{x}$ for some $r \in (0, 1)$.

Examples:

Geometric Distributions:

$\text{PMF}(x) \propto (1 - p)^{x}$, for some $p \in (0, 1)$.

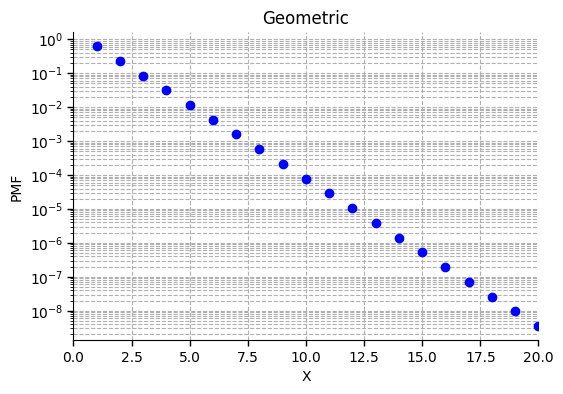

Here’s an example geometric distribution.

We’ve used a log scale for the vertical axis (log of PMF) and a linear scale for the $x$ axis, so that the geometric sequence forms a line with slope equal to $\log(1 - p)$. Markers denote the sequence of PMF values for integer $x$.
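Here is a short check of the slope on the semi-log plot. It assumes the convention where the geometric variable counts trials up to and including the first success, so the support starts at 1. The other common convention shifts the line but leaves the slope unchanged. The success probability 0.3 is an arbitrary choice.

```python
import math

p = 0.3  # assumed success probability, chosen only for illustration

# Geometric PMF on x = 1, ..., 30 (trials until first success).
pmf = [(1 - p) ** (x - 1) * p for x in range(1, 31)]
log_pmf = [math.log(v) for v in pmf]

# With unit spacing in x, consecutive differences of log PMF are the slopes.
slopes = [log_pmf[i + 1] - log_pmf[i] for i in range(len(log_pmf) - 1)]

print(slopes[0], math.log(1 - p))
```

The slope is the log of the failure probability. As the success probability grows, that log becomes more negative, so the tail decays faster.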

You can experiment with geometric distributions using the Distribution Tail Explorer or by running the code cell below. Try switching the $y$ axis to a log scale. Leave the $x$ axis on a linear scale. How does the rate of tail decay depend on the success probability $p$?

from utils_dist_5_1 import run_distribution_explorer_51

run_distribution_explorer_51(dist_type="Geometric", lock_distribution=True)

Exponential Distributions:

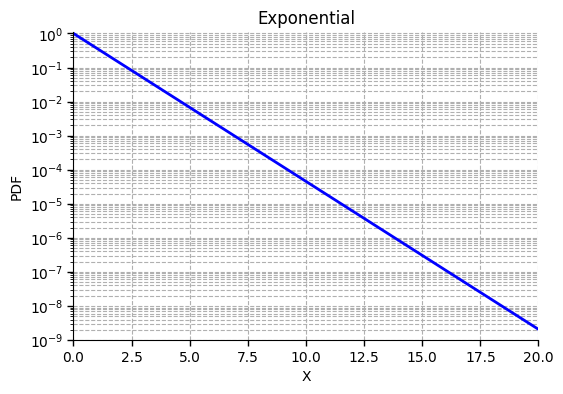

$\text{PDF}(x) = \lambda e^{-\lambda x}$ for $x \geq 0$, for some $\lambda > 0$.

Exponential distributions are often used to model continuous waiting times (see Section 6.2).

Here’s an example exponential distribution.

We’ve used a log scale for the vertical axis (log of PDF) and a linear scale for the $x$ axis, so that the exponential PDF forms a line. Notice that, just as the Pareto distribution is the continuous analog of a discrete power law distribution, the exponential distribution is the continuous analog of the geometric distribution.

You can experiment with exponential distributions using the Distribution Tail Explorer or by running the code cell below. Try switching the $y$ axis to a log scale. Leave the $x$ axis on a linear scale. How does the rate of tail decay depend on the parameter $\lambda$?

from utils_dist_5_1 import run_distribution_explorer_51

run_distribution_explorer_51(dist_type="Exponential", lock_distribution=True)

To detect exponential tails, plot the log of the PMF (or PDF) as a function of $x$ (not the log of $x$). If the log of the PMF (or PDF) approaches a line using a log scale on only the vertical axis, then the distribution has exponential tails.
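The two detection recipes (log-log for power laws, semi-log for exponentials) can be combined into a crude numerical check. The sketch below is a heuristic under stated assumptions, not a statistical test: it fits a least-squares line to the log of the density against the input and against the log of the input, then reports whichever fit leaves the smaller residual. The helper names `line_fit_rss` and `classify_tail` are hypothetical, and the check only distinguishes between these two candidate shapes.

```python
import math

def line_fit_rss(us, vs):
    """Residual sum of squares of the least-squares line v = a + b * u."""
    n = len(us)
    mean_u = sum(us) / n
    mean_v = sum(vs) / n
    suu = sum((u - mean_u) ** 2 for u in us)
    suv = sum((u - mean_u) * (v - mean_v) for u, v in zip(us, vs))
    b = suv / suu
    a = mean_v - b * mean_u
    return sum((v - (a + b * u)) ** 2 for u, v in zip(us, vs))

def classify_tail(density, xs):
    """Compare straightness on a semi-log plot against a log-log plot."""
    log_f = [math.log(density(x)) for x in xs]
    rss_semilog = line_fit_rss(list(xs), log_f)
    rss_loglog = line_fit_rss([math.log(x) for x in xs], log_f)
    return "exponential" if rss_semilog < rss_loglog else "power law"

tail_xs = [float(x) for x in range(20, 201)]   # inputs far out in the tail
exp_result = classify_tail(lambda x: 2.0 * math.exp(-0.1 * x), tail_xs)
pow_result = classify_tail(lambda x: 3.0 * x ** -4.0, tail_xs)
print(exp_result, pow_result)
```

For real data the log density would come from an estimate rather than a formula, so the residuals would be noisy and this comparison should be read as a rough guide only.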

Subexponential Tails

Examples:

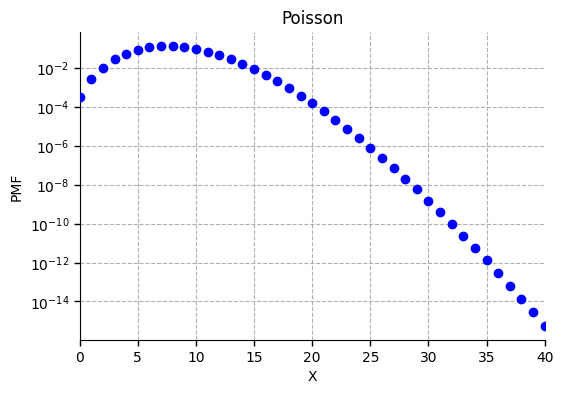

Poisson Distributions:

$\text{PMF}(x) = \frac{\lambda^x}{x!} e^{-\lambda}$ for $x = 0, 1, 2, \ldots$, for some $\lambda > 0$.

Poisson distributions occur naturally in problems involving counts of rare phenomena, or of events that occur randomly in time.

In this case the tails decay faster than exponential since $x!$ grows very quickly as a function of $x$. To a good approximation, $x! \approx \sqrt{2 \pi x} \, (x/e)^x$, so $\lambda^x / x!$ converges to zero faster than $(e \lambda / x)^x$. As $x$ increases, the fraction $e \lambda / x$ decreases, so the base of the exponent is vanishing while the exponent is also diverging.
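Both claims can be checked numerically. The sketch below compares the log of the factorial with Stirling’s approximation, then compares a Poisson PMF with a geometric sequence on the log scale. The Poisson parameter 3 and the geometric ratio 0.5 are hypothetical values chosen for illustration.

```python
import math

def log_factorial(x):
    """Exact log(x!) via the log-gamma function."""
    return math.lgamma(x + 1)

def stirling_log_factorial(x):
    """Stirling's approximation to log(x!)."""
    return x * math.log(x) - x + 0.5 * math.log(2 * math.pi * x)

def poisson_log_pmf(x, lam):
    """log of lam**x * exp(-lam) / x!."""
    return x * math.log(lam) - lam - log_factorial(x)

lam, r = 3.0, 0.5

def gap(x):
    """log PMF(x) minus log(r**x); increasingly negative if the PMF decays faster."""
    return poisson_log_pmf(x, lam) - x * math.log(r)

for x in (10, 20, 50):
    print(x, log_factorial(x) - stirling_log_factorial(x), gap(x))
```

The gap between the log PMF and the log of the geometric sequence grows without bound, which is what decaying faster than exponential means on a semi-log plot.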

You can experiment with Poisson distributions using the Distribution Plotter introduced in Section 2.5.

Here’s an example Poisson PMF plotted using a log scale for the mass function and a linear scale for the input $x$. In this case the tail corresponds to large $x$.

Notice that the log PMF is a concave function of $x$, so it accelerates downwards as $x$ increases. As a result, the slope of the log PMF becomes more negative as $x$ increases, so the PMF converges faster than any exponential, which would form a line on the log PMF plot.
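Concavity can be confirmed with second differences. In the sketch below, the Poisson parameter 3 is a hypothetical value. The first differences of the log PMF are the slopes between neighboring markers, and the second differences measure how those slopes change.

```python
import math

def poisson_log_pmf(x, lam):
    """log of lam**x * exp(-lam) / x!, using lgamma(x + 1) = log(x!)."""
    return x * math.log(lam) - lam - math.lgamma(x + 1)

lam = 3.0  # assumed parameter, chosen only for illustration
log_pmf = [poisson_log_pmf(x, lam) for x in range(0, 41)]

# Slope between consecutive markers; analytically this is log(lam / (x + 1)).
first_diff = [log_pmf[i + 1] - log_pmf[i] for i in range(len(log_pmf) - 1)]

# Change in slope; negative values everywhere mean the log PMF is concave.
second_diff = [first_diff[i + 1] - first_diff[i] for i in range(len(first_diff) - 1)]

print(first_diff[:3], first_diff[-3:])
print(max(second_diff))
```

Since the slope between neighboring markers is the log of the parameter divided by the next integer, it eventually drops below the slope of any fixed line.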

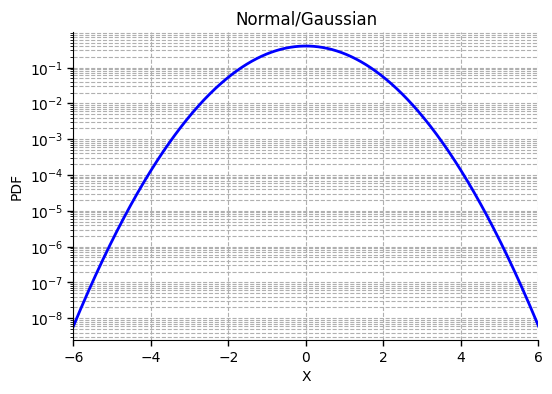

Normal (Gaussian) Distributions:

Normal, or Gaussian, distributions are the most widely used distributions in statistics. They define the classical bell-curve. They appear anytime we consider sample averages, or random numbers that are produced by sums of many independent and identical random variables. They are extremely common references for problems involving large sample sizes and estimates from large data sets. They are fundamental in the physical sciences, financial modeling, and a wide range of probability problems. Normal distributions have extremely light tails. They decay very quickly. The tails of the normal distribution decay faster than exponential:

$\text{PDF}(x) \propto e^{-\frac{1}{2} \left( \frac{x - \mu}{\sigma} \right)^2}$

since $x^2$ dominates $x$ for large $x$.

You can detect Gaussian-type tails by plotting the log of the distribution against $x$. If the log of the distribution approaches a quadratic function for large $x$, then the associated tail decays at a Gaussian rate, and is both subexponential (faster than exponential) and subpoisson (faster than Poisson).

Here’s a standard example. The log PDF, as a function of $x$, is simply the quadratic function $-\frac{1}{2} x^2 - \frac{1}{2} \log(2 \pi)$.
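A quadratic has constant second differences, which gives a quick numerical test for Gaussian-type decay. The sketch below assumes the standard normal density (mean 0, standard deviation 1) and an arbitrary grid spacing of 0.5. For comparison it applies the same test to an exponential density, whose log is a line and so has zero second differences.

```python
import math

def standard_normal_log_pdf(x):
    """log of the standard normal density."""
    return math.log(math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi))

def second_differences(values):
    """Discrete analog of the second derivative on an evenly spaced grid."""
    return [values[i + 2] - 2 * values[i + 1] + values[i]
            for i in range(len(values) - 2)]

h = 0.5                                    # grid spacing (an arbitrary choice)
xs = [-5.0 + h * i for i in range(21)]     # grid from -5 to 5

normal_sd = second_differences([standard_normal_log_pdf(x) for x in xs])
exponential_sd = second_differences([-x for x in xs if x >= 0])  # log of exp(-x)

print(normal_sd[0], -h * h)   # constant, equal to -h**2
print(exponential_sd[0])      # zero, since the log density is a line
```

Constant negative second differences indicate a downward-opening parabola on the semi-log plot, in contrast to the straight line produced by exponential tails.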

You can experiment with Normal distributions using this Distribution Tail Explorer or by running the code cell below.

from utils_dist_5_1 import run_distribution_explorer_51

run_distribution_explorer_51(dist_type="Normal", lock_distribution=True)