A variety of problems in probability and statistics involve integrating over a connected subset of points in a multi-dimensional space. This chapter will focus on two examples.

Suppose that $X_1, X_2, \ldots, X_n$ are random variables. Let $S = X_1 + X_2 + \cdots + X_n$. How is $S$ distributed?

Why sums? Finding the distribution of a sum of random variables is very important for the study of averages. For example, suppose that $X_1, X_2, \ldots, X_n$ are drawn identically and independently from some distribution with an unknown expectation $\mu$. Then it is standard practice to estimate the unknown $\mu$ with the sample average $\bar{X} = \frac{1}{n} \sum_{i=1}^{n} X_i$. For example, in Homework 11 you saw that the best fit mean of a normal distribution was the average of a collection of samples.

The sample average depends on random samples, so $\bar{X}$ is a random variable. Its distribution is determined by the distribution of the sum, $S = \sum_{i=1}^{n} X_i$. So, to study the distribution of sample averages, we will start by studying the distribution of sums. The simplest case is a sum of the form $S = X + Y$.

The theory of sums of random variables is quite deep. In this chapter we’ll set up some of the foundational mathematics needed to understand distributions of sums. We’ll revisit these ideas at the end of the course when we study concentration phenomena.

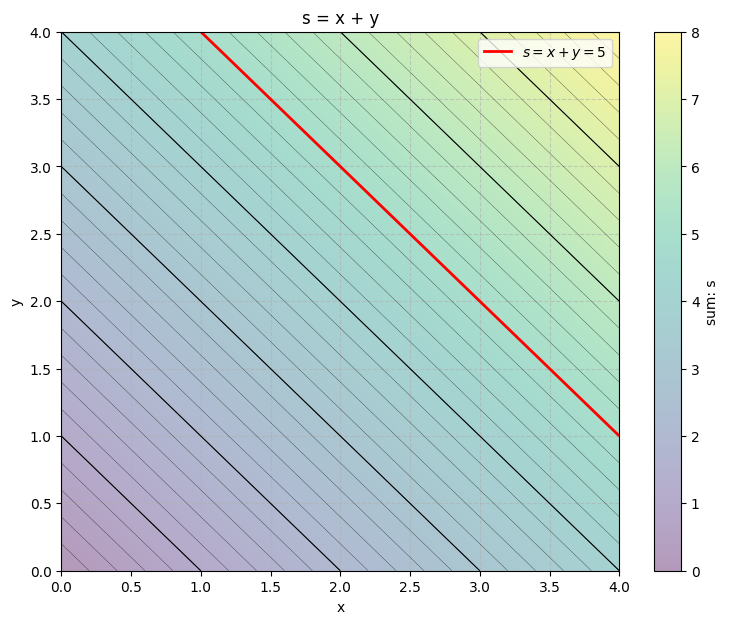

To find the distribution of the random variable $S = X + Y$, we will need to understand sums/integrals over the lines $x + y = s$, since, if $S = s$ and $X = x$, then $Y = s - x$. By studying sums/integrals over lines we will learn how to apply a convolution.

Suppose that $X$ and $Y$ are random variables. Let $R^2 = X^2 + Y^2$. How is $R^2$ distributed?

Why sums of squares? Finding the distribution of a sum of squares of random variables is important for estimating unknown variances. For example, in Homework 11 you saw that the best fit variance of a normal distribution was related to a sum of the squared samples. Sums of squares are also important if we want to understand the lengths of random vectors.

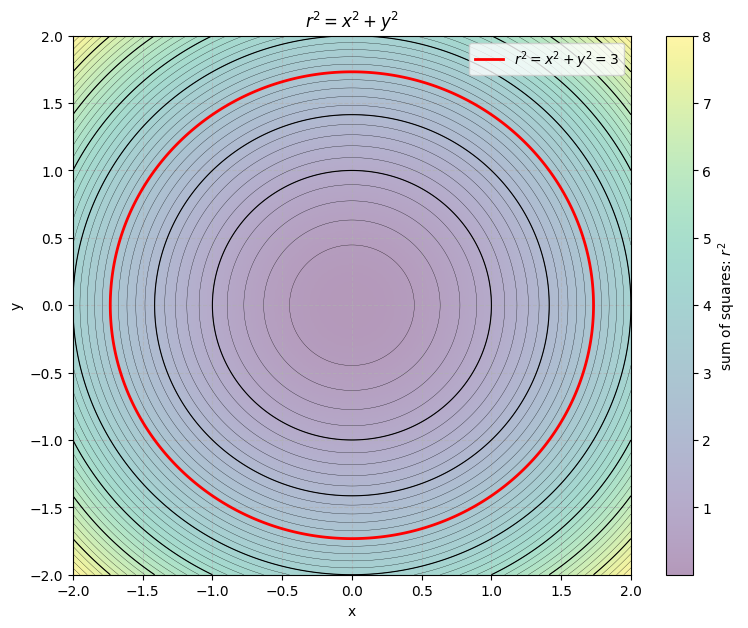

To find the distribution of a sum of squares of random variables, $R^2 = X^2 + Y^2$, we will need to understand sums/integrals over the circles, $x^2 + y^2 = r^2$. By studying sums/integrals over circles we will learn to integrate in polar coordinates. We’ll apply our knowledge to work out the normalizing constant of the normal distribution and to find the distribution of the sum of squares of two normal random variables.

Each of these problems involves summing/integrating over a subset of points in a plane. In the first case we need to run sums/integrals over lines. In the second, we need to run sums/integrals over circles. Lines and circles are examples of manifolds.

For example:

The set of all $(x, y)$ such that $x + y = s$ is a manifold. It is equivalent to the line $y = s - x$.

The set of all $(x, y)$ such that $x^2 + y^2 = r^2$ is a manifold. It is equivalent to the circle with radius $r$.

The set of all such that is a manifold. It is equivalent to an ellipse.

The set of all such that is a manifold. It is equivalent to the curve .

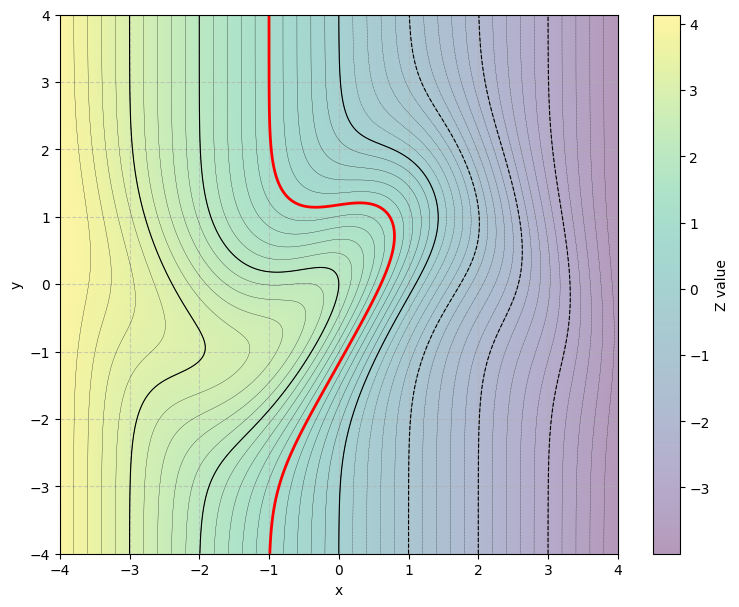

In general, if we define a random variable, $Z = g(X, Y)$, then the set of $(x, y)$ where $g(x, y) = z$ will correspond to a level set of $g$. If $g$ is some smooth, scalar-valued function, then its level sets are usually manifolds, or unions of finitely many manifolds. To find the distribution of $Z$ we will need to find either its mass function, its density function, or its cumulative distribution function. In each case, we will want to understand the chance that $Z = z$, or $Z \leq z$. These chances will require integrating or summing over the level sets of $g$. Thus, they will usually require integrating or summing over some collection of manifolds.

The figure below shows an example manifold in red. It is produced by taking a level set of a scalar-valued function of $x$ and $y$ (shown as contours in black with a heatmap to illustrate the function value).

Sums of Random Variables

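Suppose that $X$ and $Y$ are jointly distributed, discrete random variables. Let $S = X + Y$. What is $P(S = s)$?

Consider all the pairs $(x, y)$ such that $x + y = s$. Since $y = s - x$, the collection of all pairs such that $x + y = s$ is the set of pairs $(x, s - x)$, where $x$ ranges over the possible values of $X$. The figure below shows, in red, the set of all $x$ and $y$ such that $x + y = s$ for a fixed value of $s$. Notice that the manifold corresponds to the line $y = s - x$, and is a level set of the scalar-valued function $g(x, y) = x + y$.

So, to find the chance that $S = s$, we should take a union over all pairs of the form $(x, s - x)$. Since this union runs over distinct $x$, the corresponding events are disjoint, so we can use additivity:

$$P(S = s) = \sum_{\text{all } x} P(X = x, Y = s - x).$$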

We’ve run calculations like this before (see Section 2.1). For example, suppose that you roll two fair, six-sided dice. Let $X$ and $Y$ denote the values rolled on each die. Let $S = X + Y$. How is $S$ distributed? Try to find the distribution of $S$ yourself using a joint distribution table. Then, compare your answer with the solution below.

Solution

Suppose that you roll two fair dice, and the dice are distinguishable. Then there are 36 possible joint outcomes, and the joint events table is:

| Roll | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| 1 | (1,1) | (1,2) | (1,3) | (1,4) | (1,5) | (1,6) |
| 2 | (2,1) | (2,2) | (2,3) | (2,4) | (2,5) | (2,6) |
| 3 | (3,1) | (3,2) | (3,3) | (3,4) | (3,5) | (3,6) |
| 4 | (4,1) | (4,2) | (4,3) | (4,4) | (4,5) | (4,6) |
| 5 | (5,1) | (5,2) | (5,3) | (5,4) | (5,5) | (5,6) |
| 6 | (6,1) | (6,2) | (6,3) | (6,4) | (6,5) | (6,6) |

All 36 possible outcomes are equally likely since the dice are fair.

Now suppose that, as is true for many games, you are interested in the sum of the rolls. Let $S$ denote the function that accepts an outcome and returns the associated sum of rolls.

Anytime you consider a random variable you should first specify its support. At the least, we roll two ones. At the most, we roll two sixes. So, $S \in \{2, 3, \ldots, 12\}$.

Let’s fill in the table, replacing the outcomes with the sum of the rolls:

| Roll | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| 1 | 2 | 3 | 4 | 5 | 6 | 7 |
| 2 | 3 | 4 | 5 | 6 | 7 | 8 |
| 3 | 4 | 5 | 6 | 7 | 8 | 9 |
| 4 | 5 | 6 | 7 | 8 | 9 | 10 |
| 5 | 6 | 7 | 8 | 9 | 10 | 11 |
| 6 | 7 | 8 | 9 | 10 | 11 | 12 |

Notice that, even though all pairs of rolls are equally likely, the number of ways a pair can add up to some value $s$ depends on $s$.

What’s the chance that $S = 5$?

To find the chance, use probability by proportion. First, isolate all pairs of rolls that add to five. The associated collection is a level set of the function $S$: it is the collection of all outcomes $(x, y)$ with $x + y = 5$. I’ve highlighted that set below:

| Roll | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| 1 | . | . | . | 5 | . | . |
| 2 | . | . | 5 | . | . | . |
| 3 | . | 5 | . | . | . | . |
| 4 | 5 | . | . | . | . | . |
| 5 | . | . | . | . | . | . |
| 6 | . | . | . | . | . | . |

There are four pairs of rolls that add to 5 (four outcomes in the set $\{S = 5\}$) so:

$$P(S = 5) = \frac{4}{36} = \frac{1}{9}.$$

We could repeat the same process for a different value. For instance, what’s the chance that $S = 10$?

Again, isolate the corresponding level set, and count its size. That is, count the number of ways two rolls can add to 10:

| Roll | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| 1 | . | . | . | . | . | . |
| 2 | . | . | . | . | . | . |
| 3 | . | . | . | . | . | . |
| 4 | . | . | . | . | . | 10 |
| 5 | . | . | . | . | 10 | . |
| 6 | . | . | . | 10 | . | . |

There are three pairs of rolls that add to 10 (three outcomes in the set $\{S = 10\}$) so:

$$P(S = 10) = \frac{3}{36} = \frac{1}{12}.$$

Repeating this process for each possible value of $S$ gives:

| Value | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Chance | 1/36 | 2/36 | 3/36 | 4/36 | 5/36 | 6/36 | 5/36 | 4/36 | 3/36 | 2/36 | 1/36 |

Even though all pairs are equally likely, not all values of the random variable are equally likely. We are twice as likely to see $S = 3$ as $S = 2$, and six times as likely to see $S = 7$ as $S = 2$ or $S = 12$. The middle values are more likely since there are more ways to pick a pair that adds to 6, 7, or 8 than to an extreme value like 2 or 12.
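The counting argument for the two dice can be checked by brute force. The sketch below is only an illustration (the names `joint` and `pmf_sum` are made up for this example): it builds the joint distribution of two fair dice and adds the joint probabilities over each level set where the rolls sum to a given value.

```python
from fractions import Fraction
from itertools import product

# Joint distribution: all 36 ordered pairs of rolls are equally likely.
faces = range(1, 7)
joint = {(x, y): Fraction(1, 36) for x, y in product(faces, faces)}

# PMF of the sum: add the joint probabilities over each level set {x + y = s}.
pmf_sum = {}
for (x, y), p in joint.items():
    pmf_sum[x + y] = pmf_sum.get(x + y, Fraction(0)) + p

# Fractions print in lowest terms, so 3/36 appears as 1/12.
for s in sorted(pmf_sum):
    print(s, pmf_sum[s])
```

Using exact fractions avoids rounding, so the printed chances can be compared directly with a table of counts out of 36.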

If $X$ and $Y$ are continuously distributed then $S = X + Y$ is also continuously distributed. Its density function is also expressed as a “sum” over the line $y = s - x$. As usual, to move from the discrete case to the continuous case, exchange a sum with an integral:

$$f_S(s) = \int_{-\infty}^{\infty} f_{X,Y}(x, s - x) \, dx.$$
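As a rough numerical illustration of integrating a joint density along the line where the two coordinates add to a fixed total, the sketch below uses a hypothetical joint density, $x + y$ on the unit square (chosen only because it is a valid density with dependent coordinates; it does not come from the text). For this choice the density of the sum works out by hand to $s^2$ for $0 \leq s \leq 1$ and $s(2 - s)$ for $1 \leq s \leq 2$.

```python
import numpy as np

def joint_density(x, y):
    """Hypothetical joint density: f(x, y) = x + y on the unit square, 0 elsewhere."""
    inside = (x >= 0) & (x <= 1) & (y >= 0) & (y <= 1)
    return np.where(inside, x + y, 0.0)

def density_of_sum(s, n=20000):
    """Approximate the integral of f(x, s - x) dx with a midpoint Riemann sum."""
    dx = 1.0 / n
    x = (np.arange(n) + 0.5) * dx  # midpoints covering [0, 1], the support of X
    return float(np.sum(joint_density(x, s - x)) * dx)

for s in (0.5, 1.0, 1.5):
    exact = s**2 if s <= 1 else s * (2 - s)
    print(s, density_of_sum(s), exact)
```

The grid only needs to cover the support of the first coordinate, since the joint density vanishes everywhere else along the line.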

Convolution

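If $X$ and $Y$ are independent, then their joint mass/density function factors into a product of marginals:

$$f_{X,Y}(x, y) = f_X(x) f_Y(y).$$

Factoring the joint inside of a sum over a line produces a convolution:

$$f_S(s) = \int_{-\infty}^{\infty} f_X(x) f_Y(s - x) \, dx = (f_X * f_Y)(s).$$

In the discrete case, the integral is replaced by a sum over all $x$.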
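For independent discrete random variables on equally spaced values, the convolution sum is exactly what NumPy's `np.convolve` computes. A minimal sketch, reusing the two fair dice as the example (the array names are illustrative):

```python
import numpy as np

die = np.full(6, 1 / 6)          # PMF of one fair die on the values 1..6
pmf_sum = np.convolve(die, die)  # entry k is the chance that the sum equals k + 2
values = np.arange(2, 13)        # possible sums, aligned with pmf_sum

for value, chance in zip(values, pmf_sum):
    print(value, round(chance * 36), "/ 36")
```

The index shift arises because each array starts at the value 1, so the first entry of the convolution corresponds to the sum 1 + 1 = 2.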

Convolution is an essential integral operation. It is important in many image and signal processing problems (e.g. deblurring in microscopy, astronomy, or medical imaging, and low/high-pass filtering of audio). It is the key operation behind the architecture of the convolutional neural networks widely used for computer vision, automated driving, and image classification. Convolution is also important in the theories of diffusion, a wide variety of dynamical systems, and the procedures for fast multiplication that allow computers to perform algebra quickly.

Example: Sums of Independent Exponential Random Variables

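Suppose that $X$ and $Y$ are independent, identically distributed, exponential random variables with rate $\lambda$. Then $f_X(x) = \lambda e^{-\lambda x}$ for $x \geq 0$ and $f_Y(y) = \lambda e^{-\lambda y}$ for $y \geq 0$.

Let $S = X + Y$. Then $S \geq 0$, since $X$ and $Y$ are nonnegative.

If $s \geq 0$, then $x \in [0, s]$ since, if $x > s$ then $y = s - x$ would be negative. Therefore:

$$f_S(s) = \int_{0}^{s} f_X(x) f_Y(s - x) \, dx = \int_{0}^{s} \lambda e^{-\lambda x} \, \lambda e^{-\lambda (s - x)} \, dx = \lambda^2 e^{-\lambda s} \int_{0}^{s} dx = \lambda^2 s \, e^{-\lambda s}.$$

So, $S$ is a nonnegative random variable with density function:

$$f_S(s) = \lambda^2 s \, e^{-\lambda s} \quad \text{for } s \geq 0.$$

In this case $S$ is an example of a gamma random variable.

You will iterate this analysis on your homework to find the distribution of the sum of $n$ independent, identical, exponential random variables.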
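A quick simulation can serve as a sanity check on this example. Convolving two exponential densities with a common rate $\lambda$ gives the density $\lambda^2 s e^{-\lambda s}$, whose CDF is $1 - e^{-\lambda s}(1 + \lambda s)$. The sketch below assumes an arbitrary rate of 2 and a fixed seed; both are choices made only for illustration.

```python
import numpy as np

rate = 2.0                      # assumed rate parameter for this illustration
rng = np.random.default_rng(0)  # fixed seed so the run is repeatable
n = 200_000

# NumPy parameterizes the exponential by its scale, which is 1 / rate.
x = rng.exponential(scale=1 / rate, size=n)
y = rng.exponential(scale=1 / rate, size=n)
s = x + y

def gamma_cdf(t):
    """CDF obtained by integrating rate**2 * u * exp(-rate * u) from 0 to t."""
    return 1 - np.exp(-rate * t) * (1 + rate * t)

# Compare the empirical CDF of the simulated sums with the derived CDF.
for t in (0.5, 1.0, 2.0):
    print(t, np.mean(s <= t), gamma_cdf(t))
```

The two columns should agree to roughly two decimal places at this sample size.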

Interactive

You can visualize convolution as follows. First, fix $s$. Then $f_Y(s - x)$ is the function $f_Y$ reflected ($-x$ in the argument), then translated by $s$. So, convolving $f_X$ with $f_Y$ is the same as:

1. Reflecting $f_Y$.
2. Translating its reflection by $s$.
3. Taking the product of $f_X$ with the translated, reflected density $f_Y(s - x)$.
4. Finding the area underneath the product by integrating over all $x$.

Run the code cell below to experiment with the convolution of different densities. You can choose the densities $f_X$ and $f_Y$, visualize $f_X(x)$ and $f_Y(s - x)$ for different $s$, reveal their product, then compute the convolution by finding the area under the product. Repeating for all $s$ recovers the density function of $S$.
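The reflect, translate, multiply, and integrate recipe can also be sketched numerically without the interactive helper. The example below assumes two specific densities, an exponential with rate 1 and a uniform on $[0, 1]$, purely for illustration; for that pair the convolution at $s \leq 1$ is $1 - e^{-s}$, and at $s > 1$ it is $e^{-(s - 1)} - e^{-s}$.

```python
import numpy as np

dx = 0.001
x = np.arange(-5, 15, dx)  # grid of x values wide enough to hold both densities

def f_X(x):
    """Assumed example density: exponential with rate 1."""
    return np.where(x >= 0, np.exp(-np.abs(x)), 0.0)

def f_Y(y):
    """Assumed example density: uniform on [0, 1]."""
    return np.where((y >= 0) & (y <= 1), 1.0, 0.0)

def convolve_at(s):
    reflected_shifted = f_Y(s - x)         # steps 1-2: reflect f_Y, then translate by s
    product = f_X(x) * reflected_shifted   # step 3: multiply the two curves
    return float(np.sum(product) * dx)     # step 4: area under the product

for s in (0.5, 2.0):
    exact = 1 - np.exp(-s) if s <= 1 else np.exp(-(s - 1)) - np.exp(-s)
    print(s, convolve_at(s), exact)
```

Sweeping `s` over a grid and collecting `convolve_at(s)` traces out an approximation to the whole density of the sum.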

%matplotlib inline

from utils_convolution import show_convolution

show_convolution()

Sums of Squares of Random Variables

To estimate variances we often need to understand the distribution of sums of squares. In this chapter we’ll focus on the simple case when we add the squares of two random variables. We’ll also restrict our attention to continuous random variables.

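Suppose that $X$ and $Y$ are jointly distributed continuous random variables. Let $R^2 = X^2 + Y^2$ denote the sum of squares, so that $R = \sqrt{X^2 + Y^2}$ is the distance from the origin to the point $(X, Y)$. Then, the set of all $(x, y)$ such that $x^2 + y^2 = r^2$ is a circle of radius $r$:

$$\{(x, y) : x^2 + y^2 = r^2\}.$$

How is $R^2$ distributed?

In this case it will be easier to start with $R$, and to find the distribution of $R$ by first working out its cumulative distribution function. As always, we can find its density function by differentiating its cumulative distribution function: $f_R(r) = \frac{d}{dr} F_R(r)$ (see Sections 2.3 and 2.4).

By definition,

$$F_R(r) = P(R \leq r) = P(X^2 + Y^2 \leq r^2).$$

The region where $x^2 + y^2 \leq r^2$ is the collection of all points within a circle of radius $r$, centered at the origin. So, to find the CDF of $R$, we will need to find the volume under the joint density over the circle of radius $r$ (see Section 8.3):

$$F_R(r) = \iint_{x^2 + y^2 \leq r^2} f_{X,Y}(x, y) \, dx \, dy.$$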

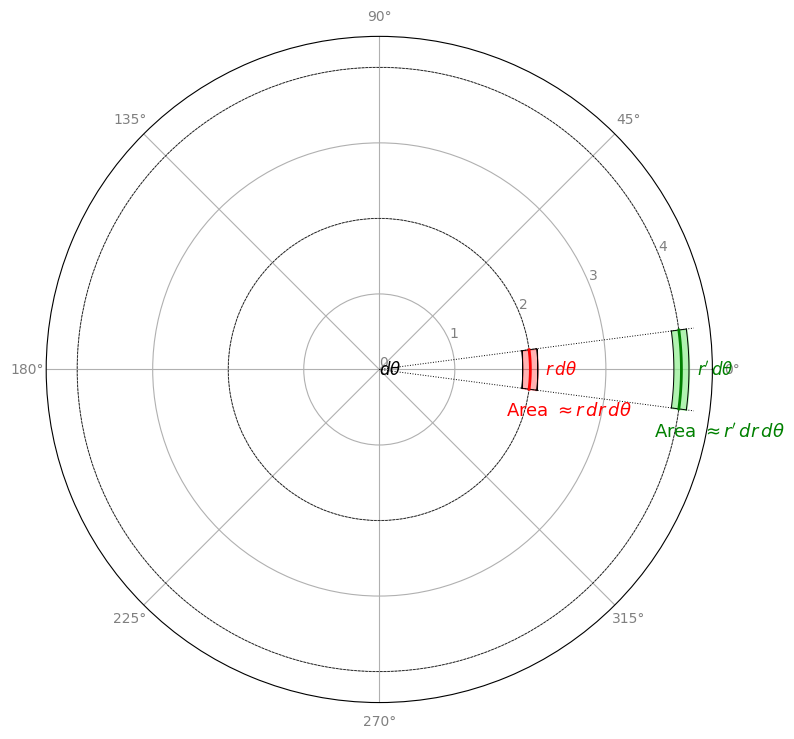

To integrate over a circular region, we will use an iterated integral in polar coordinates.

Integration in Polar Coordinates

Try integrating in polar coordinates if:

- the integrand, $f_{X,Y}(x, y)$, is more simply expressed in polar coordinates, or
- the region of integration is more naturally expressed in polar coordinates.

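In particular, the chance that $X$ and $Y$ are contained in a circle with radius $r$ is:

$$P(X^2 + Y^2 \leq r^2) = \int_{0}^{2\pi} \int_{0}^{r} f_{X,Y}(\rho \cos\theta, \rho \sin\theta) \, \rho \, d\rho \, d\theta.$$

The integrand, $f_{X,Y}(x, y)$, is usually simpler in polar coordinates if it is only a function of the radius $\rho$. If $f_{X,Y}(x, y) = h(\rho)$ for some nonnegative function $h$, and $\rho = \sqrt{x^2 + y^2}$, then the density is rotationally symmetric.

A scalar-valued function of two inputs is rotationally symmetric if it can be expressed as a scalar-valued function of the radius, $\rho = \sqrt{x^2 + y^2}$. Rotationally symmetric functions have circular level sets.

The integral of a rotationally symmetric density, $f_{X,Y}(x, y) = h(\rho)$, over a circular region of radius $r$, is:

$$\int_{0}^{2\pi} \int_{0}^{r} h(\rho) \, \rho \, d\rho \, d\theta = 2\pi \int_{0}^{r} h(\rho) \, \rho \, d\rho.$$

This is a univariate integral in $\rho$ alone.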
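To see the reduction to a single radial integral in action, the sketch below compares a brute-force Cartesian sum over a disk with $2\pi$ times the one-dimensional integral of the radial profile times the radius. The profile $1 / (\pi (1 + \rho^2)^2)$ is a hypothetical choice made for this example because it defines a valid rotationally symmetric density and the chance of landing in a disk of radius $a$ has the closed form $1 - 1/(1 + a^2)$.

```python
import numpy as np

def h(rho):
    """Assumed radial profile: f(x, y) = h(sqrt(x**2 + y**2)) is a valid joint density."""
    return 1.0 / (np.pi * (1.0 + rho**2) ** 2)

def disk_probability_polar(radius, n=100_000):
    """2 * pi * integral of h(rho) * rho from 0 to radius, by the midpoint rule."""
    drho = radius / n
    rho = (np.arange(n) + 0.5) * drho
    return float(2 * np.pi * np.sum(h(rho) * rho) * drho)

def disk_probability_cartesian(radius, n=1000):
    """Double integral of f over the disk, summed over an n-by-n grid of cell midpoints."""
    d = 2 * radius / n
    mid = -radius + (np.arange(n) + 0.5) * d
    xx, yy = np.meshgrid(mid, mid)
    rr = np.sqrt(xx**2 + yy**2)
    return float(np.sum(h(rr) * (rr <= radius)) * d * d)

for a in (1.0, 2.0):
    print(a, disk_probability_polar(a), disk_probability_cartesian(a), 1 - 1 / (1 + a**2))
```

The polar version needs far fewer function evaluations for the same accuracy, since it never has to resolve the curved boundary of the disk on a square grid.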

Example: Independent Normal Random Variables

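Suppose that $X$ and $Y$ are independent, identically distributed, standard normal random variables. Then both $X$ and $Y$ are supported on $(-\infty, \infty)$ with density function:

$$f_X(x) = c \, e^{-x^2/2}$$

where $c$ is a normalizing constant that we have not yet worked out.

Since $X$ and $Y$ are independent, the random vector, $(X, Y)$, has joint density:

$$f_{X,Y}(x, y) = f_X(x) f_Y(y) = c^2 e^{-(x^2 + y^2)/2} = c^2 e^{-\rho^2/2}$$

where:

$$\rho = \sqrt{x^2 + y^2}.$$

So, the joint density of $X$ and $Y$ is rotationally symmetric!

To check our work, run the code cell below and select “Independent Normal.” You should see a bell-shaped peak, with circular level sets. The surface is unchanged when you rotate it, so $f_{X,Y}$ is rotationally symmetric.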

from utils_lsg import show_level_sets

show_level_sets()

Let’s find the distribution of $R = \sqrt{X^2 + Y^2}$ and $R^2 = X^2 + Y^2$. Along the way we’ll work out the normalizing constant for a single standard normal random variable.

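Let’s find the distribution of $R = \sqrt{X^2 + Y^2}$ first. Both can be recovered from the CDF:

$$F_R(r) = P(R \leq r) = P(R^2 \leq r^2).$$

The CDF evaluated at $r$ is the volume under the joint density of $X$ and $Y$ over the circle with radius $r$. Write the joint density as $f_{X,Y}(x, y) = c^2 e^{-(x^2 + y^2)/2}$, where $c$ is the normalizing constant of each marginal density. Integrating in polar coordinates:

$$F_R(r) = \int_{0}^{2\pi} \int_{0}^{r} c^2 e^{-\rho^2/2} \, \rho \, d\rho \, d\theta = 2\pi c^2 \int_{0}^{r} \rho \, e^{-\rho^2/2} \, d\rho.$$

To evaluate the integral, let’s integrate by change of variables (see Section 7.2). Let $u = \rho^2/2$ so that $du = \rho \, d\rho$. Then:

$$\int_{0}^{r} \rho \, e^{-\rho^2/2} \, d\rho = \int_{0}^{r^2/2} e^{-u} \, du = 1 - e^{-r^2/2}.$$

It follows that:

$$F_R(r) = 2\pi c^2 \left(1 - e^{-r^2/2}\right)$$

for some normalization constant $c^2$.

To find the normalization constant, recall that $\lim_{r \to \infty} F_R(r) = 1$ for any CDF. When $r$ diverges, $r^2/2$ diverges, so $e^{-r^2/2}$ converges to zero. Therefore:

$$1 = \lim_{r \to \infty} F_R(r) = 2\pi c^2.$$

Therefore, $c^2 = \frac{1}{2\pi}$.

So:

$$F_R(r) = 1 - e^{-r^2/2} \quad \text{for } r \geq 0.$$

It follows that:

$$f_R(r) = \frac{d}{dr} F_R(r) = r \, e^{-r^2/2} \quad \text{for } r \geq 0.$$

So, $R$ is a nonnegative random variable with density $f_R(r) = r \, e^{-r^2/2}$. In this case, $R$ is an example of a chi random variable with two degrees of freedom (also known as a Rayleigh random variable).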

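To find the distribution of the sum of squares, $R^2$, apply the change of density formula to $f_R(r) = r \, e^{-r^2/2}$ using $g(r) = r^2$ (see Section 7.2). Then $g^{-1}(s) = \sqrt{s}$ and $\frac{d}{ds} g^{-1}(s) = \frac{1}{2\sqrt{s}}$. Therefore:

$$f_{R^2}(s) = f_R(\sqrt{s}) \, \frac{1}{2\sqrt{s}} = \sqrt{s} \, e^{-s/2} \, \frac{1}{2\sqrt{s}} = \frac{1}{2} e^{-s/2} \quad \text{for } s \geq 0.$$

So, the sum of squares, $R^2 = X^2 + Y^2$, is a nonnegative random variable with density proportional to an exponential function. It follows that the sum of squares is an exponential random variable with rate parameter $\frac{1}{2}$.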
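A short simulation offers a check on this conclusion. For two independent standard normal draws, the distance from the origin should have CDF $1 - e^{-r^2/2}$, and the sum of squares should behave like an exponential random variable with rate $1/2$ (so its mean is 2 and its CDF is $1 - e^{-s/2}$). The seed and sample size below are arbitrary choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable
n = 200_000
x = rng.standard_normal(n)
y = rng.standard_normal(n)

r_squared = x**2 + y**2         # sum of squares
r = np.sqrt(r_squared)          # distance from the origin

# Empirical value on the left, value predicted by the derivation on the right.
print(np.mean(r <= 1.0), 1 - np.exp(-0.5))          # CDF of the radius at 1
print(np.mean(r_squared <= 3.0), 1 - np.exp(-1.5))  # CDF of the sum of squares at 3
print(np.mean(r_squared), 2.0)                      # mean of an exponential with rate 1/2
```

Each pair of printed numbers should agree to about two decimal places.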

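Look back to the stage in this analysis where we worked out the normalization factor $c^2 = \frac{1}{2\pi}$. This is the normalization factor for the joint density:

$$f_{X,Y}(x, y) = \frac{1}{2\pi} e^{-(x^2 + y^2)/2}.$$

Since $X$ and $Y$ are independent, with marginals, $f_X(x) = c \, e^{-x^2/2}$ and $f_Y(y) = c \, e^{-y^2/2}$:

$$f_{X,Y}(x, y) = f_X(x) f_Y(y) = c^2 e^{-(x^2 + y^2)/2}.$$

So, the normalizing constant for the marginal densities is: $c = \frac{1}{\sqrt{2\pi}}$. Therefore, the standard normal density is normalized by:

$$f_X(x) = \frac{1}{\sqrt{2\pi}} e^{-x^2/2}.$$

This argument explains the factor of $\frac{1}{\sqrt{2\pi}}$ in the definition of the normal density.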
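The normalizing constant can also be confirmed numerically: the area under $e^{-x^2/2}$ over the whole real line should equal $\sqrt{2\pi}$. The sketch below approximates that area with a midpoint Riemann sum on $[-10, 10]$; the truncation is an assumption that costs essentially nothing, because the integrand is smaller than $e^{-50}$ outside that interval.

```python
import math
import numpy as np

n = 200_000
dx = 20.0 / n
x = -10.0 + (np.arange(n) + 0.5) * dx         # midpoints covering [-10, 10]
area = float(np.sum(np.exp(-x**2 / 2)) * dx)  # area under the unnormalized bell curve

print(area, math.sqrt(2 * math.pi))
```

Dividing the unnormalized curve by this area is what makes the standard normal density integrate to one.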