In the last chapter we saw that double integrals and double sums can be expanded as iterated integrals and iterated sums. Since expectations are weighted averages, expectations involving two or more random variables may be expanded as multiple integrals or multiple sums. In each case, converting from a multiple integral (or sum) to an iterated integral (or sum) converts a single expectation into a pair of nested expectations.

This approach is very useful in applied problems. It breaks a problem involving two (or more) random variables into a sequence of problems, each involving only one variable at a time.

In order to understand the nested expectations, we will need to understand expectations over a single variable, fixing a different variable. An expectation given a fixed condition on some of the random inputs is a conditional expectation.

Conditional Expectation

Suppose that $X$ and $Y$ are jointly distributed random variables and $g$ is a scalar-valued function of $X$ and $Y$. Then, the conditional expectation of $g(X, Y)$ is the expected value of $g(X, Y)$ given some constraint on the values of $X$ and/or $Y$.

Applying the division rule, conditional equals joint over marginal (see Section 1.5 and Section 8.3):

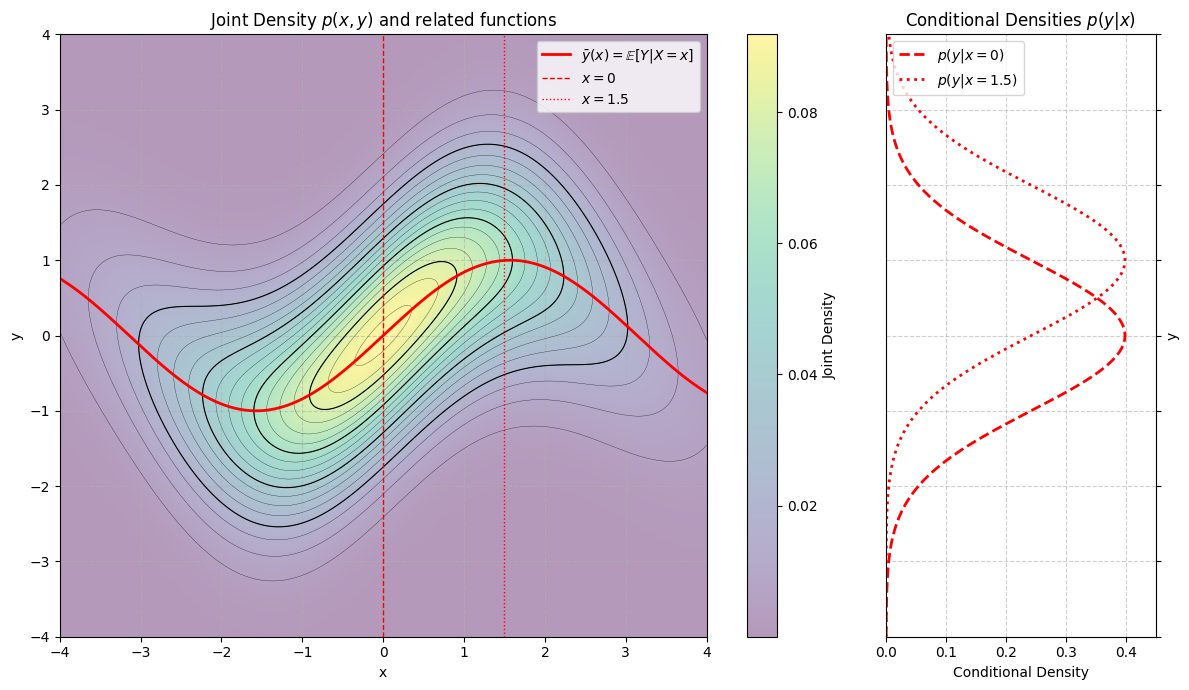
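Written out, the division rule gives the following form for a conditional expectation in the continuous case. This is a sketch under assumed notation: the symbols $X$, $Y$, $g$, $f_{X,Y}$, $f_X$, and $f_{Y \mid X}$ are labels chosen here for the two random variables, the function, the joint density, the marginal density, and the conditional density.

$$
E[g(X, Y) \mid X = x] = \int g(x, y) \, f_{Y \mid X}(y \mid x) \, dy = \int g(x, y) \, \frac{f_{X,Y}(x, y)}{f_X(x)} \, dy
$$

In the discrete case, the integral becomes a sum over $y$ and the densities become probability mass functions.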

Recall that all expectations are the center of mass of some distribution (see Section 4.1). So, we can visualize the conditional expectation of $Y$ as the center of mass of the conditional distribution of $Y$ given $X = x$. Since conditional distributions are proportional to cross-sections of joint distributions (see Section 8.3), we can imagine conditional expectations as the center of mass of a cross-section of a joint distribution.

The figure below shows an example joint density function as a heat map. The contours are level sets of the density. The solid red line shows $E[Y \mid X = x]$, the conditional expectation of $Y$ given $X = x$. The vertical dashed and dotted red lines show the range of possible $y$ for two fixed values of $x$. The corresponding conditional distributions of $Y$ are shown in the panel to the right. Notice that the center of each conditional matches the $y$-coordinate where the solid red line intersects the corresponding vertical red line.

Run the code cell below for an interactive example. You can vary the value of $x$, and track how both the conditional distribution of $Y$ and its expectation vary as a function of $x$. Notice that the conditional density of $Y$ is proportional to the cross-section of the joint density, taken at the chosen $x$, shown in the left-hand panel.

from utils_cond_exp import show_conditional_expectation

show_conditional_expectation()

In both examples the conditional expectation of $Y$ given $X = x$ depends on the choice of $x$. This should not be surprising. The conditional distribution of $Y$ given $X = x$ is proportional to the cross-section of the joint density at $x$. The cross-section varies depending on the choice of $x$, so the conditional expectation of $Y$ given $X = x$ may also vary with $x$.
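To see the same dependence in a discrete setting, here is a minimal sketch that computes a conditional expectation from a joint table using "conditional equals joint over marginal". The joint PMF and the names `joint` and `conditional_expectation` are hypothetical, chosen only for illustration; they are not part of `utils_cond_exp`.

```python
# Hypothetical joint PMF for illustration: joint[x][y] = P(X = x, Y = y).
joint = {
    0: {0: 0.10, 1: 0.20, 2: 0.10},
    1: {0: 0.05, 1: 0.15, 2: 0.40},
}


def conditional_expectation(joint, x):
    """Return E[Y | X = x] using conditional = joint / marginal."""
    row = joint[x]                    # the cross-section of the joint at X = x
    marginal = sum(row.values())      # P(X = x)
    return sum(y * p / marginal for y, p in row.items())


# The conditional mean of Y changes as the cross-section changes with x.
cond_means = {x: conditional_expectation(joint, x) for x in joint}
for x, m in cond_means.items():
    print(f"E[Y | X = {x}] = {m:.4f}")
```

Each row of the table plays the role of a cross-section: dividing by the row total normalizes it into a conditional distribution, and its center of mass is the conditional expectation.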

Iterated Expectation

Iterated expectation expresses a joint expectation over both $X$ and $Y$ as an iterated expectation, first over $Y$ given $X$, then over $X$:

$$
E[g(X, Y)] = E\big[\, E[g(X, Y) \mid X] \,\big]
$$

As usual, we can choose whether to work with $X$ on the outside and $Y$ on the inside, or $Y$ on the outside and $X$ on the inside.

Loosely, you can remember this law as expressing a joint average as an average of conditional averages. Or, more succinctly, as an average of averages.

Other Names

Iterated expectation is sometimes called the chain rule of expectations or the tower property of expectation. Here we are using the term iterated expectation to relate iterated expectations to the more general strategies of iterated integration and iterated summation.

Averaging Averages

You’ve probably used an iterated average before.

Suppose that a student has a 100 percent homework average, a 90 percent quiz average, and an 80 percent exam average. Suppose that their final grade is a weighted average of their component scores, with weights 20 percent homework, 30 percent quizzes, and 50 percent exams. Then their final grade is an average of averages:

$$
\text{final grade} = 0.2 \times 100 + 0.3 \times 90 + 0.5 \times 80 = 20 + 27 + 40 = 87
$$

Examples

Like the other algebraic properties of expectation, applying iterated expectation can make it much easier to compute some joint expectations. Here are some example cases:

Suppose that and are jointly distributed, where and . What is ?

Solution

Apply iterated expectation:

Then, by the linearity of expectation (see Section 4.2):

Notice that, in this example, we were able to compute the expectations without evaluating a single sum or integral. We didn’t even need to know the joint distribution!

Suppose that and are drawn sequentially. Suppose that is an indicator variable. If , draw from a binomial on 100 trials with success probability . If , draw from a binomial on 100 trials with success probability . Then:

What is ?

Solution

Apply iterated expectation. In general, if two variables are drawn in sequence, apply iterated expectation in the same order that you would use to sample the variables:

Plugging in:

The conditional expectations are the expectations of binomial distributions. The expectation of a binomial equals the number of trials times the success probability. So:

Therefore:

In this case, the average of averages interpretation is quite clear. The values 20 and 60 are the conditional averages. The joint average is a weighted average of the conditional averages.
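The sketch below checks this kind of calculation exactly, without simulation. It rests on stated assumptions: the success probabilities 0.2 and 0.6 are chosen to match the conditional averages 20 and 60, the assignment of each probability to a value of the indicator is a guess, and the indicator probability `Q` is purely hypothetical. The resulting number therefore illustrates the method and is not the answer to the example above.

```python
from math import comb

N_TRIALS = 100
# Assumed mapping from the indicator value to the binomial success probability.
P_SUCCESS = {0: 0.2, 1: 0.6}
# Hypothetical probability that the indicator equals 1 (not taken from the text).
Q = 0.25
P_INDICATOR = {0: 1 - Q, 1: Q}


def binom_pmf(k, n, p):
    """P(K = k) for K ~ Binomial(n, p)."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


# Direct route: a double sum over the joint distribution.
joint_average = sum(
    count * P_INDICATOR[i] * binom_pmf(count, N_TRIALS, P_SUCCESS[i])
    for i in P_INDICATOR
    for count in range(N_TRIALS + 1)
)

# Iterated route: conditional averages (trials times success probability),
# then a weighted average of those conditional averages.
conditional_averages = {i: N_TRIALS * P_SUCCESS[i] for i in P_INDICATOR}
iterated_average = sum(P_INDICATOR[i] * conditional_averages[i] for i in P_INDICATOR)

print(joint_average, iterated_average)
```

The iterated route needs only two multiplications and one weighted average, while the direct route sums 202 terms to reach the same value.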

Suppose that $X \sim \text{Geometric}(p)$. What is $E[X]$?

Solution

This doesn’t look like an obvious case for iterated expectation. We computed this expectation in Section 7.1 using the tail sum formula (summation by parts). Recall that:

$$
E[X] = \frac{1}{p}
$$

This answer makes intuitive sense. A geometric random variable represents the number of trials, in a sequence of independent and identical binary trials, up to and including the first success (see Section 2.2). The parameter $p$ is the success probability. The larger $p$, the more likely each trial is to succeed, so the fewer trials needed, on average, to reach a success. If $p = 1/10$, then it will take 10 trials, on average, to reach the first success.

Imagine running the process that produces a geometric random variable. Before you run any trials, $E[X]$ represents how many trials you should expect to run before stopping.

Now you run a trial. It either succeeds or fails. Let $Y = 0$ if it fails and $Y = 1$ if it succeeds. If the trial succeeds, then you stop, and $X = 1$. If it fails, you go on to the next trial.

So, by iterated expectation:

$$
E[X] = P(Y = 1) \, E[X \mid Y = 1] + P(Y = 0) \, E[X \mid Y = 0]
$$

Substituting in the chance of success:

$$
E[X] = p \, E[X \mid Y = 1] + (1 - p) \, E[X \mid Y = 0]
$$

Since we stop immediately if we succeed, $E[X \mid Y = 1] = 1$. So:

$$
E[X] = p + (1 - p) \, E[X \mid Y = 0]
$$

All that remains is the expected number of trials until our first success given that we failed on the first trial ($Y = 0$). Since the trials are independent and identical, the expected additional number of trials needed to stop after failing on the first trial equals the expected number of trials needed to stop before we ran the first trial. In essence, every time we fail, we start the process over.

So:

$$
E[X \mid Y = 0] = 1 + E[X]
$$

The “1” represents the first trial that failed. The remaining $E[X]$ represents the expected number of future trials until a success. Now:

$$
E[X] = p + (1 - p)\,(1 + E[X])
$$

Rearrange, and solve for $E[X]$:

$$
E[X] = 1 + (1 - p) \, E[X] \quad \Longrightarrow \quad p \, E[X] = 1
$$

Therefore:

$$
E[X] = \frac{1}{p}
$$
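As a numerical sanity check, the sketch below computes the mean of a geometric random variable in two ways: by summing the series directly, and by repeatedly applying the first-step relation "one success ends the process, one failure restarts it". The function names and the value `p = 0.1` are chosen here for illustration.

```python
def geometric_mean_by_series(p, max_trials=5000):
    """Truncated sum of k * P(X = k), where P(X = k) = p * (1 - p)**(k - 1)."""
    return sum(k * p * (1 - p) ** (k - 1) for k in range(1, max_trials + 1))


def geometric_mean_by_first_step(p, iterations=2000):
    """Iterate e <- p * 1 + (1 - p) * (1 + e) until it settles.

    With probability p the first trial succeeds and we stop after 1 trial;
    with probability 1 - p we have spent 1 trial and start over.
    """
    e = 0.0
    for _ in range(iterations):
        e = p * 1 + (1 - p) * (1 + e)
    return e


p = 0.1
series_value = geometric_mean_by_series(p)
first_step_value = geometric_mean_by_first_step(p)
print(series_value, first_step_value, 1 / p)
```

The iteration converges because each pass shrinks the error by a factor of `1 - p`, so both routes settle on the same value as `1 / p`.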

Independent Products

We can use iterated expectation to prove one last property of expectations. In Section 4.2 we worked out rules for simplifying expectations of sums. We can now simplify expectations of some (not all) products. If $X$ and $Y$ are independent random variables, then:

$$
E[XY] = E[X] \, E[Y]
$$

Proof

You will complete this proof on your homework using iterated integration. Here’s the start.

The proof follows from the product rule for iterated integrals and sums. As usual, we can prove the result in either the continuous or discrete case since the rules for iterated integrals and sums are the same. In the continuous case:

$$
E[XY] = \iint x \, y \, f_{X,Y}(x, y) \, dx \, dy
$$

Next, use the multiplication rule for independent random variables to expand the joint density (see Section 8.3):

$$
E[XY] = \iint x \, y \, f_X(x) \, f_Y(y) \, dx \, dy
$$

Finally, apply iterated integration.

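To complete the proof using iterated expectation, write:

$$
E[XY] = E\big[\, E[XY \mid X] \,\big]
$$

If $X$ and $Y$ are independent, then the conditional distribution of $Y$ given $X = x$ is the marginal distribution of $Y$, no matter the value of $x$. Therefore:

$$
E[XY \mid X = x] = x \, E[Y \mid X = x] = x \, E[Y]
$$

Let $c = E[Y]$.

Now:

$$
E[XY] = E[c \, X]
$$

The quantity $c$ does not depend on $X$, so, by the linearity of expectation:

$$
E[c \, X] = c \, E[X]
$$

Therefore:

$$
E[XY] = E[X] \, E[Y]
$$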
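The product rule, and the reason it applies to only some products, can be illustrated with two small discrete examples. Both are hypothetical and chosen for illustration: in the first, the joint PMF is built as a product of marginals, so the variables are independent; in the second, the second variable is an exact copy of the first.

```python
def mean(pmf):
    """Expected value of a discrete PMF given as {value: probability}."""
    return sum(v * p for v, p in pmf.items())


def mean_of_product(joint):
    """E[XY] for a joint PMF given as {(x, y): probability}."""
    return sum(x * y * p for (x, y), p in joint.items())


# Independent case: the joint is the product of the marginals.
px = {1: 0.2, 2: 0.5, 3: 0.3}
py = {0: 0.4, 10: 0.6}
independent_joint = {(x, y): px[x] * py[y] for x in px for y in py}
indep_lhs = mean_of_product(independent_joint)  # E[XY]
indep_rhs = mean(px) * mean(py)                 # E[X] E[Y]

# Dependent case: Y is a copy of X, with X equally likely to be -1 or +1.
dependent_joint = {(-1, -1): 0.5, (1, 1): 0.5}
dep_marginal = {-1: 0.5, 1: 0.5}
dep_lhs = mean_of_product(dependent_joint)      # E[XY] = E[X^2]
dep_rhs = mean(dep_marginal) * mean(dep_marginal)

print(indep_lhs, indep_rhs)  # these agree
print(dep_lhs, dep_rhs)      # these differ
```

In the dependent case the product is always 1, so its expectation is 1, while each marginal mean is 0. Factoring the expectation of a product is justified only when the joint distribution factors.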